I am fascinated by what's happening in the LLM/chatbot/"AI" landscape. I studied an "AI" unit at Uni more than 30 years ago. We called it "Artificial Neural Networks" in those days, but the underlying premise remains. I'm sure the tech is WAY more complicated than the simple models and training data we used.

Heh, for my final-year project I wrote a program in C, in DOS, running on a "luggable" computer (not quite a laptop, but a computer with a battery that you could carry around and use) with a sound interface and a microphone. There was a small database of words. Students would walk around with the computer and get volunteers to say the words that appeared on the screen, and it would capture the audio to be used as training data. I managed to put a pretty oscilloscope graphic on the screen as well, to make it fun for the person talking: they pressed the space bar, said the word, then pressed the space bar again for the next word. After twenty or so words it would thank them and save all the sound files to folders, to be used later as training and test data for speech-to-text. Very early stuff.

Anyway, it's fun to see how far it has come.

In my most recent vibe-coding session, with Claude, my current favourite vibe-coding chatbot, "we" wrote a Python script to analyse music. Let me explain.

I have been mixing songs from my local church for a few years now. It's such a great way of practising and improving, both as a musician and as a mixer/producer. I have mixed more than 60 songs over the last two years, and I thought I'd take my favourite 12 from 2025 and make a playlist. But each mix has a different feel: as my ear and my skills developed, the 12 songs across 2025 ended up sounding quite different, even though they are all similar in style. Sure, there are differences (we aren't playing the same song over and over!), but I felt that as an "album" of 12 songs there should be a certain level of consistency.

I think you know where this is going.

A basic consistency check is your LUFS and LRA. You don't want to be constantly turning the volume knob up and down between songs, or even within songs, so an overall perceived loudness (integrated LUFS) and a measure of how much dynamic variation there is in a song (LRA, the loudness range) matter both for the listener and for a streaming service. Streaming services will adjust your loudness if you don't hit their target.
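To make the "streaming services will adjust your loudness" point concrete, here is a minimal Python sketch of the arithmetic a loudness-normalising player does: the gain it applies is simply the target level minus the track's integrated loudness. The -14 LUFS target is an assumption (a commonly quoted figure; each service picks its own reference and policy), and the two loudness readings are made up. Measuring integrated LUFS itself (ITU-R BS.1770 weighting and gating) is left to your DAW or a dedicated library.

```python
# Assumption: a target of -14 LUFS, a commonly quoted streaming reference.
# Each service chooses its own target and policy; check their documentation.
TARGET_LUFS = -14.0


def normalisation_gain_db(integrated_lufs: float, target_lufs: float = TARGET_LUFS) -> float:
    """Gain in dB a loudness-normalising player would apply to hit the target.

    Negative means the track is turned down, positive means turned up.
    Real services may limit or skip positive gain; that is ignored here.
    """
    return target_lufs - integrated_lufs


def db_to_linear(gain_db: float) -> float:
    """Convert a gain in dB to a linear amplitude factor."""
    return 10 ** (gain_db / 20)


if __name__ == "__main__":
    # Hypothetical integrated loudness readings for two mixes.
    for name, lufs in [("loud mix", -9.5), ("quiet mix", -16.0)]:
        gain = normalisation_gain_db(lufs)
        print(f"{name}: {lufs} LUFS -> {gain:+.1f} dB (x{db_to_linear(gain):.2f})")
```

With these numbers the loud mix is turned down by 4.5 dB and the quiet one turned up by 2 dB (if the service applies positive gain at all), so loudness bought with extra limiting is largely taken away on playback.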

In the Reaper DAW I can easily track LUFS and LRA, but I was curious whether I could also get other sonic characteristics as metrics for comparison in Reaper.

In typical chatbot style there was lots of talk about integrating various audio-analysis libraries into Reaper (it has great programmability), blah blah, but chatbots just don't think outside the box. When it started talking about calling some Python external to Reaper, I said, "Can we just do all this outside of Reaper?" and the answer was basically "Yes, that would be easy." Sheesh.

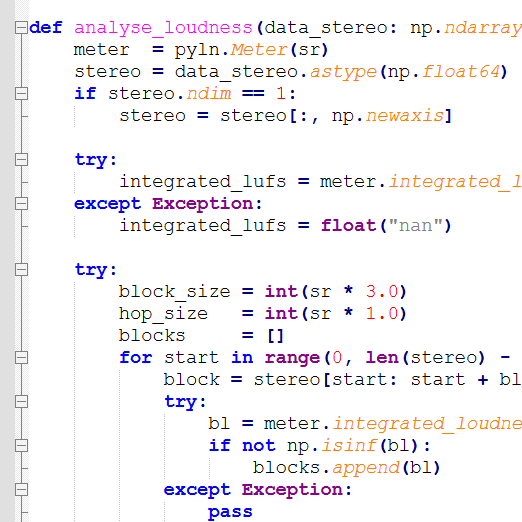

Suffice it to say, I now have a Python script that analyses a group of songs across an interesting range of metrics, looks for outliers, and suggests what needs to change and how to change it.
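One way a script like this could flag outliers is a robust z-score: measure how far each song sits from the album median, in units of the median absolute deviation (MAD). Unlike a mean and standard deviation, the median and MAD aren't dragged around by the one wild mix you are trying to catch. This is a hypothetical sketch, not necessarily what the actual script does; the song names, LRA values and the 2.5 threshold are made up for illustration.

```python
import statistics


def robust_z_scores(values: dict[str, float]) -> dict[str, float]:
    """Distance of each song from the album median, in scaled-MAD units.

    The MAD is multiplied by 1.4826 so it is comparable to a standard
    deviation when the data are roughly normally distributed.
    """
    med = statistics.median(values.values())
    mad = statistics.median(abs(v - med) for v in values.values())
    if mad == 0:
        # More than half the songs share the median value: there is no
        # spread to measure against, so this sketch reports all scores as zero.
        return {song: 0.0 for song in values}
    scale = 1.4826 * mad
    return {song: (v - med) / scale for song, v in values.items()}


def find_outliers(values: dict[str, float], threshold: float = 2.5) -> dict[str, float]:
    """Return songs whose robust z-score exceeds the threshold in either direction."""
    scores = robust_z_scores(values)
    return {song: z for song, z in scores.items() if abs(z) > threshold}


if __name__ == "__main__":
    # Hypothetical loudness range (LRA, in LU) per song.
    lra = {"Song A": 6.1, "Song B": 5.8, "Song C": 6.5, "Song D": 14.2, "Song E": 5.9}
    median_lra = statistics.median(lra.values())
    for song, z in find_outliers(lra).items():
        direction = "above" if z > 0 else "below"
        print(f"{song}: LRA {lra[song]} LU is well {direction} the album median of {median_lra} LU")
```

The same two functions work for any per-song metric (LUFS, band energy, rolloff), which is handy when you want one outlier report across everything.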

So much going on there. My first thought is: "C'mon JAW, are you trying to make all music hit the same bland, boring metric?" No. And a little bit yes. Who else here turns down the start of "Time" on Dark Side of the Moon because they don't need extremely loud alarm clocks scaring them? I get it: the artistry, the "scare me into action because every year is getting shorter", but I don't need it on the 100th or 1000th listen. Or the first whalesong note in "Echoes".

So I'm going to say yes: the LUFS values should be close to each other (especially since the streamers will change them if you don't behave yourself), and the dynamics shouldn't be crazy wide. You don't want a listener turning up a song at the start because it has a quiet guitar intro, only to turn it down again when the verse kicks in. At the same time, you don't want a song so compressed that it has no dynamics at all (I was guilty of that on a few).
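The rules of thumb in the previous paragraph can be written as simple checks: keep each song's integrated loudness near the album's median, and keep its LRA inside a window that is neither squashed nor wildly wide. In this sketch the tolerances (within 1 LU of the median loudness, LRA between 4 and 12 LU) and the song measurements are hypothetical values chosen for illustration, not standards; tune them to taste.

```python
import statistics

# Hypothetical tolerances for this sketch, not industry standards.
LUFS_TOLERANCE_LU = 1.0  # max distance from the album's median loudness
LRA_MIN_LU = 4.0         # below this, a mix may sound squashed
LRA_MAX_LU = 12.0        # above this, listeners may reach for the volume knob


def check_album(songs: dict[str, tuple[float, float]]) -> list[str]:
    """songs maps title -> (integrated LUFS, LRA in LU); returns warning strings."""
    median_lufs = statistics.median(lufs for lufs, _ in songs.values())
    warnings = []
    for title, (lufs, lra) in songs.items():
        offset = lufs - median_lufs
        if abs(offset) > LUFS_TOLERANCE_LU:
            warnings.append(f"{title}: {offset:+.1f} LU from the album median loudness")
        if lra < LRA_MIN_LU:
            warnings.append(f"{title}: LRA {lra:.1f} LU, possibly over-compressed")
        elif lra > LRA_MAX_LU:
            warnings.append(f"{title}: LRA {lra:.1f} LU, very wide dynamics")
    return warnings


if __name__ == "__main__":
    # Made-up measurements: (integrated LUFS, LRA in LU).
    album = {
        "Song A": (-14.2, 6.0),
        "Song B": (-13.9, 3.1),
        "Song C": (-16.0, 7.5),
        "Song D": (-14.0, 13.0),
    }
    for warning in check_album(album):
        print(warning)
```

Comparing against the album's own median, rather than a fixed number, keeps the check about consistency within the playlist; the streaming target is a separate question.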

As for spectral data broken into bands (Sub, Bass, LoMid, Mid, Presence, Air), I do feel there should be a certain amount of homogeneity across the board, and yes, I did tweak some outliers. The spectral rolloff point at 85% (the frequency below which 85% of the spectral energy sits) is another interesting metric. I don't want some songs sounding like AM radio and others sounding like you are standing outside a rave party, particularly when the recordings are from the same venue, in the same style of music, with the same groups of musos appearing in the songs.
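Here is a minimal sketch of both spectral metrics, working on a power spectrum given as (frequency in Hz, power) pairs, for example the squared magnitudes from an FFT such as `numpy.fft.rfft`. The band edges are assumptions (the bands are named here but their boundaries aren't), and the spectrum in the usage example is made up. The rolloff function follows the same idea as the `roll_percent` parameter (default 0.85) of librosa's `librosa.feature.spectral_rolloff`, although librosa computes it frame by frame rather than over one whole-song spectrum.

```python
# Hypothetical band edges in Hz (lower bound inclusive, upper exclusive).
BANDS = [
    ("Sub", 20, 60),
    ("Bass", 60, 250),
    ("LoMid", 250, 500),
    ("Mid", 500, 2000),
    ("Presence", 2000, 6000),
    ("Air", 6000, 20000),
]


def band_fractions(spectrum: list[tuple[float, float]]) -> dict[str, float]:
    """Share of in-band power per band; power outside 20 Hz to 20 kHz is ignored."""
    totals = {name: 0.0 for name, _, _ in BANDS}
    for freq, power in spectrum:
        for name, lo, hi in BANDS:
            if lo <= freq < hi:
                totals[name] += power
                break
    overall = sum(totals.values())
    if overall == 0:
        return totals
    return {name: p / overall for name, p in totals.items()}


def rolloff_hz(spectrum: list[tuple[float, float]], fraction: float = 0.85) -> float:
    """Lowest frequency at which cumulative power reaches `fraction` of the total."""
    ordered = sorted(spectrum)  # ascending by frequency
    total = sum(p for _, p in ordered)
    running = 0.0
    for freq, power in ordered:
        running += power
        if running >= fraction * total:
            return freq
    return 0.0  # only reached for an empty spectrum


if __name__ == "__main__":
    # Made-up coarse spectrum: (frequency in Hz, power).
    spectrum = [(40, 1.0), (100, 4.0), (1000, 3.0), (8000, 2.0)]
    for band, share in band_fractions(spectrum).items():
        print(f"{band:>8}: {share:.0%}")
    print(f"85% rolloff: {rolloff_hz(spectrum)} Hz")
```

Using fractions rather than absolute band power keeps the comparison about tonal balance, so a song that is simply louder overall doesn't look like a spectral outlier.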

From a vibe-coding point of view, it's so good to do: fun, and super effective and efficient. From an art point of view, I like consistency, and I like having my mixes compared with each other to see where I went a bit wild in places. I have templates and recurring processes, but I mix to the song, so each mix is always going to have a different vibe. From an album point of view, I think reeling in some of the outliers is a good idea.

If you want to try it out, get it here.